I am experimenting with a framework for summarizing how I observe what goes on around me, analyze situations, and come up with solution approaches. Currently this is what I have:

{Desires} × {Problems} × {Mechanisms} × {Devices}

Everything I see can be analyzed as a result of the cross product (strictly, the Cartesian product: every combination of one entry from each set) of these 4 broad categories. To make it easier to remember, they can be factored into 2 major categories:

{Questions} × {Answers}

Where obviously

- [Questions] Desires (objectives) lead to problems (practicalities) to solve

- [Answers] Mechanisms (abstract concepts) hosted by a device (implementation) to address questions
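To make the factoring concrete, here is a minimal Python sketch. The entries in each set are made up purely for illustration; the only point is that pairing {Questions} with {Answers} yields exactly the same combinations as the full four-way product.

```python
from itertools import product

# Hypothetical, tiny sets chosen only for illustration.
desires = ["understand complexity", "improve utility"]
problems = ["limited mental capacity"]
mechanisms = ["abstraction", "exchange"]
devices = ["functions", "markets"]

# Full four-way product: one entry from each category.
four_way = set(product(desires, problems, mechanisms, devices))

# Factored form: {Questions} x {Answers}.
questions = list(product(desires, problems))    # (desire, problem) pairs
answers = list(product(mechanisms, devices))    # (mechanism, device) pairs

# Concatenating a question tuple with an answer tuple gives a 4-tuple again.
two_way = {q + a for q, a in product(questions, answers)}

assert four_way == two_way
print(len(four_way))  # 2 * 1 * 2 * 2 = 8 combinations
```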

Why the cross product? By tabulating everything I have learned or know in 4 columns (categories), I can select any few columns (a subset) and notice many recurring themes (questions) and common solution approaches (answers). This echoes the old saying “there’s nothing new under the sun”.

Then what about innovations? Are we constantly creating something new? Yes, we still are, but if you look closely, very few ideas are so fundamentally new that they cannot be synthesized by combining old ones (sometimes recursively).

Let me use the framework itself as an example of how to apply this framework (yes, it’s recursive):

- Desires: predict and understand many phenomena

- Problems: mental capacity is limited

- Mechanisms: this framework (breaking observations into 4 categories)

- Devices: tabulation (cross-products, order reduction)

Feedback as an example:

- Desires: have good outcomes (or meet set objectives)

- Problems: not there yet

- Mechanisms: take advantage of past data for future outputs (through correction)

- Devices: feedback path (e.g. regulators or control systems)

Feedforward as an example that shares many properties with feedback:

- Desires: have good outcomes (or meet set objectives)

- Problems: not there yet

- Mechanisms: take advantage of past data for future outputs (through prediction)

- Devices: predictor (e.g. algorithm or formula)

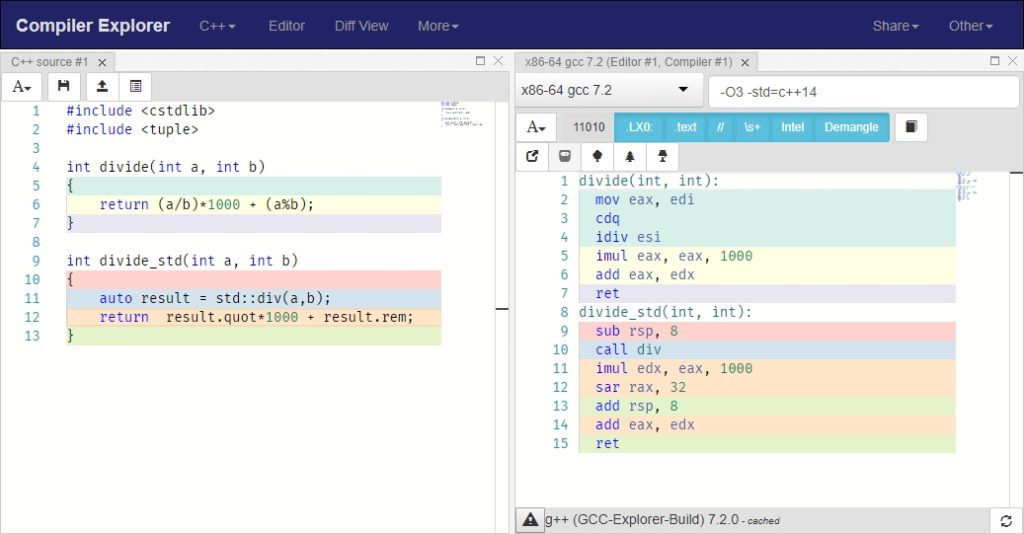
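The difference between correction and prediction can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the toy system (output = input + disturbance), the function names, and the gain value. It is not a real controller design.

```python
def run_feedback(target, disturbance, gain=0.5, steps=20):
    """Feedback: repeatedly correct the input using the last observed error."""
    u = 0.0  # input we control
    y = 0.0  # last observed output
    for _ in range(steps):
        error = target - y       # past data: how far off we were
        u += gain * error        # correction
        y = u + disturbance      # toy system: output = input + disturbance
    return y


def run_feedforward(target, predicted_disturbance, disturbance):
    """Feedforward: choose the input once, from a prediction of the disturbance."""
    u = target - predicted_disturbance  # prediction, no measurement of the output
    return u + disturbance


# Feedback converges on the target without knowing the disturbance in advance.
print(abs(run_feedback(10.0, 3.0) - 10.0) < 1e-3)   # True

# Feedforward is exact when the prediction is right...
print(run_feedforward(10.0, 3.0, 3.0))               # 10.0
# ...and is off by exactly the prediction error when it is wrong.
print(run_feedforward(10.0, 2.0, 3.0))               # 11.0
```

Both share the same desire (hit the target) and the same problem (not there yet); only the mechanism and device differ, which is what the two lists above say.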

Abstraction as an example:

- Desires: understand complexities (e.g. large code base)

- Problems: limited mental capacity (programmers are humans after all)

- Mechanisms: abstraction (generic view grouping similar ideas together)

- Devices: black-boxes (e.g. functions, classes)

Trade as an example:

- Desires: improve utility (utility = happiness in economics lingo)

- Problems: one cannot do everything on one's own (limited capacity)

- Mechanisms: exchange based on comparative advantage

- Devices: market (goods and services)

Business as an example:

- Desires: improve utility (through trade)

- Problems: need to offer something for trade

- Mechanisms: create value

- Devices: operations

Money (and Markets) as two examples:

- Desires: facilitate trade

- Problems: valuation and transfer are difficult under barter; trade is decentralized

- Mechanisms: a common medium

- Devices: money (currencies), markets (platform for trade)

Law as an example:

- Desires: make the pie bigger by working cooperatively

- Problems: every individual tries to maximize their own interest but is mentally too limited to consider working together to grow the pie (Pareto-efficient solutions)

- Mechanisms: set rules and boundaries (I personally think it’s a sloppy patch that is way overused and way abused) and get everybody to buy into them

- Devices: law and enforcement

Religion as an example:

- Desires: coexist peacefully

- Problems: irreconcilable differences

- Mechanisms: blind, unverified trust (faith)

- Devices: deities and religion

Just with the examples above, many desires can be consolidated along the lines of "making ourselves better off", and many problems can be consolidated along the lines of "we’re too stupid". Of course that’s not everything, but it shows the power of tabulating into 4 categories and consolidating the parts into a few unique themes.
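As a rough sketch of what that consolidation looks like in practice, the snippet below tabulates a few of the examples above and counts how often each desire and problem recurs. The `Row` type and the lightly shortened wording of the entries are my own choices for illustration.

```python
from collections import Counter, namedtuple

# One row per example, one field per category (names are illustrative).
Row = namedtuple("Row", "example desire problem mechanism device")

rows = [
    Row("framework", "predict and understand", "limited mental capacity",
        "4-category framework", "tabulation"),
    Row("feedback", "good outcomes", "not there yet",
        "correction from past data", "feedback path"),
    Row("feedforward", "good outcomes", "not there yet",
        "prediction from past data", "predictor"),
    Row("abstraction", "understand complexities", "limited mental capacity",
        "abstraction", "black boxes"),
    Row("trade", "improve utility", "limited capacity",
        "exchange", "market"),
    Row("business", "improve utility", "need something to trade",
        "create value", "operations"),
]

# Select one column at a time and count repeated entries.
desire_counts = Counter(r.desire for r in rows)
problem_counts = Counter(r.problem for r in rows)

# Entries that appear more than once are the recurring themes.
recurring_desires = sorted(d for d, n in desire_counts.items() if n > 1)
recurring_problems = sorted(p for p, n in problem_counts.items() if n > 1)

print(recurring_desires)   # ['good outcomes', 'improve utility']
print(recurring_problems)  # ['limited mental capacity', 'not there yet']
```

Merging near-duplicates such as "limited capacity" and "limited mental capacity" is still a manual judgment call; the counting only surfaces exact repeats.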

I chose to abstract the framework into 4 broad categories instead of 2 because two is too simplified to be useful: since the framework is a way to organize (compactify) observations into a manageable number of unique items, there would be too many distinct entries if I had only two categories. Nonetheless, I would refrain from having more than 7 categories because most humans cannot reason effectively with that many levels of nested for-loops (that’s what cross products boil down to).
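To show the nested for-loop view literally, here is a small Python sketch with placeholder entries (`d1`, `p1`, and so on are made up). Four categories mean four levels of nesting, and the result matches what `itertools.product` generates.

```python
from itertools import product


def nested_loops(desires, problems, mechanisms, devices):
    """Enumerate every combination with one loop level per category."""
    combos = []
    for d in desires:
        for p in problems:
            for m in mechanisms:
                for v in devices:
                    combos.append((d, p, m, v))
    return combos


# Placeholder entries, two per category.
categories = [["d1", "d2"], ["p1", "p2"], ["m1", "m2"], ["v1", "v2"]]

combos = nested_loops(*categories)

# Same combinations, in the same order, as the library's Cartesian product.
assert combos == list(product(*categories))
print(len(combos))  # 2 ** 4 = 16
```

Each extra category adds one more loop level and multiplies the number of combinations, which is the practical reason to keep the category count small.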

I also separated desires from problems because I realized that way too often people (managers, clients, customers, governments, etc.) ask the wrong question because they narrowed it down to the wrong problem, which leads to a lot of wasted work and frequent changes of direction. People are too often asked to solve hard problems that turn out to be the wrong ones for fulfilling the motivating desires. Very few learn to trace the request back to its source (the desires) and find the correct problem to address, which often has an easy solution that is more valuable to the requester than what was originally asked for. The result is often an avoidable, unhappy outcome for everybody. An emphasis on desires is one of my frequently used approaches to prevent these kinds of mishaps.
